What is ALM?

As always, it’s an acronym. ALM means Application Lifecycle Management, and many texts about ALM are available: white papers, articles, blogs, how-tos… But in my subjective view, it means:

Keep track of everything we need.

/Mr. Rapp

Don’t track things we can generate.

Generate everything automagically.

What is ALM for the general population in the Power Platform area?

You may think:

Why should I know ALM? I sort of know it anyway, and anyone can just export a solution, import that solution – tada!

Actually, skip that too. It’s possible to fix a bug directly in the production environment! Why shouldn’t I when I can?

/NOT Mr. Rapp

Application Lifecycle Management 🛞

If you just ~~Google~~ Bing it, it will show you a lot of circles.

It’s going round and round and never stops…

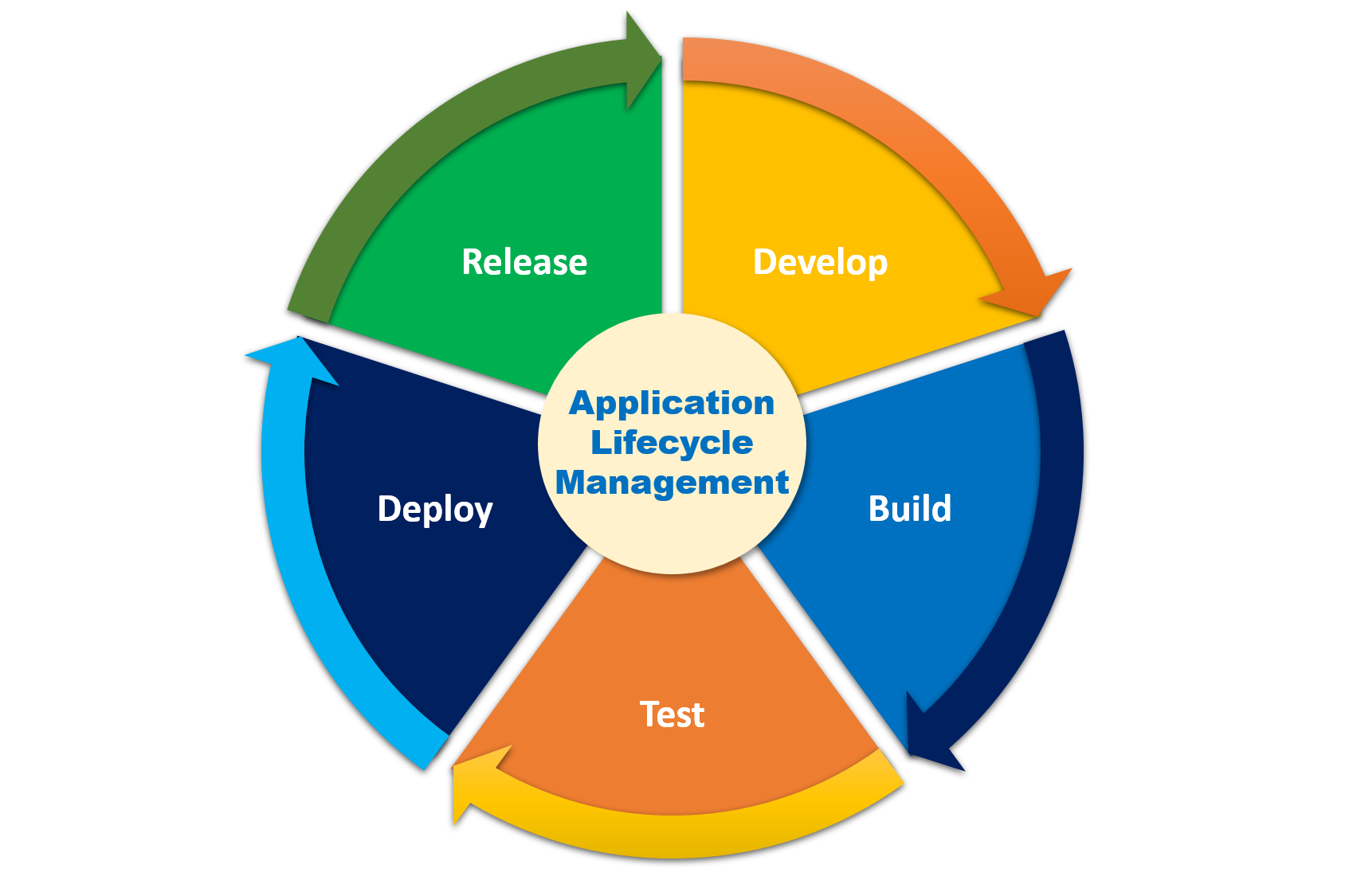

My focus is probably only a part of it, so I focus on these five slices:

🧑💻Develop – 👷♀️Build – 🧪Test – 🚚Deploy – 📢Release

It’s actually in one of the images above:

This is “my ALM“, and it goes round and round and gets back around again – forever…

I’ve never been in a project where we just go from A to Z, and then we’re done. Have you? I guess not. So…

We iterate.

The Pipeline is very related to ALM, but…

…I’m not talking about “Pipelines in Power Platform“ recently released by Microsoft, even if there’s a lot of buzz these days (see Carsten Groth series 1st 2nd 3rd 4th 5th, XrmToolCast, Aric Levin, Temmy Raharjo, Reza Dorrani, etc., etc…)

These new features are totally awesome for makers and admins, to get more control and to share solutions in a controlled way.

But maybe you are working with a full system based on Power Platform that is the core of the company. Maybe you’re a “pro-coder”, or what might be called “code-first”. Either way – read on here!

This is not about automated pipelines – we’re talking about knowing all the details of the Pipeline, a bit more hardcore. It may be a tiny bit difficult to set up, but when that’s done – I just love it.

We all should love a proper pipeline!

/J. Rapp

Power Platform Solutions 😍

It is wonderful that it is sooo easy to do all things with Solutions!

But it’s sooo easy to do it in the wrong way…

You really need to know about Solutions if you are in the Power Platform area. There is no “I’ll read it during my vacation, one day” – just do it now.

I’ve been talking and writing (e.g. Case closed: Managed or Unmanaged solutions) about why you should use Managed solutions for a long time. Scott Durow wrote (Pets vs. Cattle – How to manage your Power App Environments) similarly; but stayed away from the discussion.

You should probably start by reading Microsoft Learn – Solutions overview. Please do. 🙏 Then you continue here. Ok?

Untangling Solutions

Too many times, I have ended up in projects trying to untangle dependencies, just to finally find out what the system really is and what they actually need.

Why is that? How can it be so easy to tangle up the solutions?

I’d like to say:

Without a proper ALM, you are asking for problems.

Without a proper ALM, you get two risks: Unmanaged Solutions and Inconsistent Installs, where the installed products differ between your environments.

Unmanaged Solutions

It is far too common to create a new solution, maybe a “Sprint 5 Solution” or a “Jonas Quick Fixes Solution”, and when it is fixed/done, import it to Test and Prod as an unmanaged solution. Sure, we can remove that solution to “clean it up”, but the components in that solution are never removed by removing the solution. Unmanaged solutions only contain pointers to the components; the components themselves are owned by the Default solution. And the Default solution cannot be removed. Period.

Inconsistent Installs

A classic problem is installing a new product in Dev or Test, just to test it, and then you can’t import your solution to Prod. Now you have created – probably without even knowing it – dependencies between the tested product and your own solution.

🧑💻 The Develop slice

Development covers setting up the data model, views, forms, canvas apps, flows, and the more techy stuff: JavaScript, TypeScript, and C#. So we should not narrowly look at low/no-code technology only, but not at “the code” only either. We just talk about everything…

Commit e v e r y t h i n g ✅

You should survive even if all environments blow up badly and you have nothing left. You should be able to recreate DEV, TEST, and PROD from the source control.

The committed files are the Source Of Reality.

Notice the difference between “pets” and “cattle“. These environments are not pets. They are cattle. Period.

There are interesting discussions on the difference between pets and cattle – here on StackExchange, and here from cloudscaling.

Even if nothing has blown up for you, creating a new development environment for a branch should be easy!

So the development environment is where it is designed and built – but it’s not the Single Source Of Truth.

Please no! Don’t commit ALL files 😬

It is quite common to see that all files are committed to source control… *sigh*

You probably did it just to be safe, so that you wouldn’t miss some small file you thought was unimportant. So okay, just commit all the files on the local hard disk.

Why shouldn’t you?

A few examples of reasons:

- It makes it harder to find the code you need

- It can be stuck at old versions when you should have updated

- It gets someone else’s flavor of Visual Studio

- It wastes disk space (probably not for you, but for “the cloud”)

Use a .gitignore file!

.gitignore tells git which files it shall – surprise – ignore. If an ignored file is created or updated, git won’t add it to the list of changes. See docs here: Microsoft Learn, GitHub Docs, git Documentation.

The easiest way is to create that file in the root folder of your repository and get great samples from GitHub: https://github.com/github/gitignore

Since we are all often working with C# and similar, you can go directly to this .gitignore sample: https://github.com/github/gitignore/blob/main/VisualStudio.gitignore

Copy, Paste, and now you’re happier than you were.

Remove ignored files

Note that files already committed are not removed, so you have to do a bit of work to remove them from the repo.

The sample .gitignore file currently holds almost 400 lines, and that’s boring to go through. But just looking at the first 40 lines (most lines are also empty or comments, relax!) does what we need. These are my highlights:

- Source Control folders: $tf .vs

- Generated files in folders: packages bin obj logs

- Personal settings files: *.suo *.vspscc *.user *.pfx
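To get a feeling for what these highlights would catch in an existing repo, here is a minimal sketch. It is only a rough approximation of how git matches patterns (real .gitignore rules are richer), and the names `looks_ignored` and `files_to_review` are made up for this illustration.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# The highlights listed above, taken from the VisualStudio.gitignore sample.
IGNORED_FOLDERS = {"$tf", ".vs", "packages", "bin", "obj", "logs"}
IGNORED_FILE_PATTERNS = ["*.suo", "*.vspscc", "*.user", "*.pfx"]


def looks_ignored(path: str) -> bool:
    """Rough check: is any folder in the path ignored, or does the file name match?"""
    parts = PurePosixPath(path).parts
    folders, file_name = parts[:-1], parts[-1]
    # Compare in lower case, since the sample uses patterns like [Bb]in/ and [Oo]bj/.
    if any(folder.lower() in IGNORED_FOLDERS for folder in folders):
        return True
    return any(fnmatchcase(file_name.lower(), p) for p in IGNORED_FILE_PATTERNS)


def files_to_review(tracked_files: list[str]) -> list[str]:
    """Return the tracked files that probably should not be in source control."""
    return [f for f in tracked_files if looks_ignored(f)]
```

Feed it the output of `git ls-files` (one path per line) and you get a quick list of candidates to stop tracking.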

Start using .gitignore

If you already have a bunch of unnecessary files committed, it is actually quite easy to get rid of them.

Read this article from StackOverflow: Apply .gitignore on an existing repository already tracking large number of files.
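The commonly suggested recipe is to clear git’s index, re-add everything so the .gitignore takes effect, and commit. A minimal sketch of that recipe, assuming you run it on a clean working tree with nothing uncommitted; the function names and the commit message are just placeholders for this example:

```python
import subprocess


def untrack_ignored_commands(
    message: str = "Stop tracking files covered by .gitignore",
) -> list[list[str]]:
    """Build the git commands that re-apply .gitignore to already tracked files."""
    return [
        # Remove everything from the index only; files on disk are left untouched.
        ["git", "rm", "-r", "--cached", "."],
        # Re-add everything; files matching .gitignore are now skipped.
        ["git", "add", "."],
        # Commit the result, which records the ignored files as deleted from the repo.
        ["git", "commit", "-m", message],
    ]


def run_in_repo(repo_path: str, commands: list[list[str]]) -> None:
    """Run the commands in order inside the repository, stopping at the first failure."""
    for command in commands:
        subprocess.run(command, cwd=repo_path, check=True)
```

Calling `run_in_repo(".", untrack_ignored_commands())` from the repository root does the actual work. Keeping the command list separate makes it easy to print and review before anything is changed.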

Develop Testing

When we are developing, we have to test a bit instead of just dry swimming (that’s at least a Swedish expression).

When adjusting forms, it is quite obvious to try that the look and feel works fine; the same goes for views, dashboards, etc.

For plugins, we upload them to the DEV environment and can test them hands-on. We probably test with plugins compiled in Debug mode, and should have the tracing setting set to log All. That helps us a lot…

Unit tests are what we also need… I like it, I know it is needed, and I really suck at it. I know I know, I should. I will. One day… #promise We have magic help from Daryl LaBar’s XrmUnitTest and even better from Jordi Montaña’s Fake Xrm Easy. But that’s not all…

Develop Environment

The development environment – “The DEV” – is the only one with unmanaged solutions. Why is that? Because this is the one where we “develop” it.

Only touch The DEV!

Some companies use individual DEV environments – one for each and every developer. Then everyone in the team works separately and checks in changes to the source control system, even for fixing view layouts, adding columns, and so on.

This is a great concept, but it also adds a lot to the administration, and basically – it takes time.

This individual DEV concept may be useful for huge systems, but – like for me in all my projects so far – we share one DEV environment for small/medium/half-large companies.

There is a concept in between – having different DEV environments for each sprint development. Usually, we create a new DEV when starting the next sprint, and at the end of that sprint, we merge it into the main branch. The main branch may exist only in source control, or it may also have an actual environment.

👷♀️ The Build slice

Pipelines

After the Develop slice, we start using the Azure DevOps Pipelines 🥳 (Note that you may use GitHub Actions as well.)

I’ve been working with pipelines for a long time, and I love it. I started with WISE in the ’90s, and then I used the Pipelines in Azure DevOps. Back in the day, only tools from the community existed, and I even created a few tools myself that we just needed. Now there are Build Tools for the pipelines related to Power Platform. The best community tools available are created by Wael Hamze – read about them, and use them when needed!

Something bad happened a few years ago… MS says that we are going roughly 30 years back in technology. Stop with a decent UI, and go back to simple texts. Actually worse than before – now everything breaks with one small misplaced <space>. This is the idea of YAML.

I will only say this once, but…

I hate YAML.

/Jonas A. Rapp

Yes, I truly do.

Now move on, we like pipelines anyway. But read the docs of YAML, and you’ll love it too. Not.

Purpose of the Build slice

Two main things to do here: pre-testing and getting an artifact.

Pre-testing (=unit testing) should be written in the Test slice, but since we are using a phenomenal pipeline, unit tests will be executed during the Build.

Artifact means the result of the build, what we bring to the next stage – Deploy slice. An Artifact can contain a compiled exe-file, a dll-file, a full installation, or a managed Power Platform solution.

Pipeline Structure

You know – everyone is special. We look at ourselves as special – and everyone else is just the general, classic style.

The general pipeline always looks like:

- Pull all source code

- Compile it

- Run tests

- Put the exe’s and dll’s in a drop folder in the artifact

What we do – we at CRMK company and similar competitors – is a bit more complicated…

- Pull all source code

- Compile it

- Run tests

- Update plugins and javascript in the DEV/BUILD environment solution(s)

- Export unmanaged solutions for testing

- Run Microsoft’s Power Platform Checker on all solutions

- Update versions of the C# assemblies and commit version files

- Update plugins etc. in the build environment with new versions

- Update the version of the solutions

- Export managed solutions

- Export unmanaged solutions

- Unpack the unmanaged solutions

- Commit and push the unpacked files

- Put the managed solutions in a drop folder in the artifact
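One of the steps above, updating the version of the solutions, is easy to show in code. Power Platform solution versions have four parts (major.minor.build.revision). Which part gets bumped on each build is a project decision, so the default below (revision) and the function name `bump_version` are only assumptions for this sketch.

```python
VERSION_PARTS = ["major", "minor", "build", "revision"]


def bump_version(version: str, part: str = "revision") -> str:
    """Increase one part of a four-part version and reset the parts after it."""
    numbers = [int(n) for n in version.split(".")]
    if len(numbers) != len(VERSION_PARTS):
        raise ValueError(f"Expected major.minor.build.revision, got '{version}'")
    index = VERSION_PARTS.index(part)  # raises ValueError for an unknown part name
    numbers[index] += 1
    # Everything to the right of the bumped part starts over at zero.
    for i in range(index + 1, len(numbers)):
        numbers[i] = 0
    return ".".join(str(n) for n in numbers)


print(bump_version("1.4.2.7"))           # 1.4.2.8
print(bump_version("1.4.2.7", "minor"))  # 1.5.0.0
```

The new number is what the pipeline would write to the solution before exporting it, so the managed artifact and the committed unpacked files carry the same version.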

Commit what?

Why don’t we commit the exported managed solutions? We all know I love Managed…

Well, Managed Solutions are created or built in the Power Platform, and they are truly generated during the pipeline.

They are artifacts to be used later during the Deploy slice.

Now don’t commit files that are generated.

/JR

Build Environment

In most of my projects, we just use the existing DEV environment to build from it.

To be even more proper and use the way Microsoft Healthy ALM suggests, we spin up a new BUILD environment for each build pipeline that is started. The BUILD will be a temporary environment and will be dropped as the pipeline is done.

Why would you use BUILD?

We will produce the artifact – the managed solution – from this “clean” environment. It should be the same as producing it from the DEV, but gremlins may creep into the DEV environment over time… The BUILD won’t have any gremlins that can spread to other environments…

🧪 The Test slice

The Test isn’t really a separate slice. Instead, it is included in both Develop and Build.

There are two types of tests:

The first type, the tests we can automate, is run during the Build pipeline. Unit tests in both C# and TypeScript are run there, as well as the Solution Checker from Microsoft.

The second type needs to be done manually, so technically, it is done after the Deploy pipeline, to have an updated environment with our solutions.

Note: you can test it in the DEV environment before even starting the pipeline – but you shouldn’t!!

Test Environment

Where do we test? Everywhere, of course…. but we focus on one or a few different environments.

There may be a simple TEST, there should be a UAT (User Acceptance Testing), there should probably be an SIT (System Integration Test), and there may be even more environments for different purposes.

Regardless of how many testing environments we have, we can quite simply add more targets for the Deploy pipeline.

🚚 The Deploy slice

Deploying seems hard… but that part is really simple. Basically, it means “Start to use this new artifact.“

The general deployment pipeline is just:

- Install the exe-file. (or similar)

It is actually quite close to our Power Platform deploy pipelines, but with a few more steps in it:

- Get solutions from the Artifact

- Import managed solutions

- If needed, apply solution upgrades

- Apply settings file

- Update data
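Since the same steps run for every target, adding another test environment is just one more entry in a list. Here is a minimal sketch of that idea. The names `DEPLOY_STEPS`, `deploy` and `run_step` are hypothetical, and stopping at the first failure (so a broken build never reaches the next environment) is an assumption about how you would want it to behave, not a description of any specific tool.

```python
from typing import Callable

# The deploy steps listed above, in the order they run for each target.
DEPLOY_STEPS = [
    "Get solutions from the artifact",
    "Import managed solutions",
    "Apply solution upgrades (if needed)",
    "Apply settings file",
    "Update data",
]


def deploy(targets: list[str], run_step: Callable[[str, str], bool]) -> list[str]:
    """Run every step for each target in order and stop at the first failure.

    run_step(target, step) does the real work and returns True on success.
    Returns the targets that were fully deployed.
    """
    deployed: list[str] = []
    for target in targets:
        for step in DEPLOY_STEPS:
            if not run_step(target, step):
                # A failed step means later targets (like PROD) are never touched.
                return deployed
        deployed.append(target)
    return deployed
```

A call like `deploy(["TEST", "SIT", "UAT", "PROD"], run_step)` walks through the environments in order, with `run_step` being whatever actually talks to the environment in your pipeline.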

Deploy Environments

The Deploy environments are quite obvious – the environments for tests (TEST, SIT, UAT, etc.) and, finally, the goal: deploying to the Production environment, where the end users can now use our awesome features.

📢 The Release slice

This slice is mostly an administration task.

My personal thoughts are: this means we have created this build, tested it and accepted it, deployed it to the test environment (actually in the wrong order… but anyway), and now it’s ready for an official release with this version. But it’s more a statement than a technical issue.

The technical part can be to retain the created artifact.

Conclusion

This post is not an official statement; this is just what I think, what I like, and what I truly believe in. I have seen too many projects, and too many teams maintaining systems, that are all working too hard – too unsure, too much ‘we’ll wing it’ and too much crossing-fingers-lets-hope-it-works-today kinda attitude.

That really hurts me. Why are so many pros working too hard? I don’t know.

There may be better ideas than mine, of course, but this is what I’d like to share with you.

This is ALM a la Rapp.

Do you really want?

- Do you want to have a great system or to deliver a great system? If you have read this far, I’m sure you do. Thanks for that 🙏

- Do you want to be able to upgrade to even newer features, when they are available?

- Do you want to be able to – in the worst case – roll back to a previous release?

- Do you want to find out who did what, and when?

- Do you want to see how the system really works, instead of being lost in repositories?

I can go on forever with reasons… but now it’s time to finish it up.

Create proper pipelines, and use them.

Stop shooting from the hip manually.

Or maybe put like this:

Shoot the pets manually once and for all.

Shoot the cattle automatically,

around and around and around and again.

🧑💻Develop – 👷♀️Build – 🧪Test – 🚚Deploy – 📢Release

Thanks Jonas – ”I hate YAML too” – but I like your article. Thanks for writing it!

Beyond the unit test frameworks that you mentioned do you have any preferences for automated testing in the UI (EasyRepro / playwright / something else)?

To be clear, it was a bit too narrow to only mention unit tests, we do a bit more…

But also, we still use these types of tests like EasyRepro and integration tests. We will get better…

Would be great if you had the time to do a video explaining the steps.

Also, do you have a repo with your YAML files?

Thanks Jonas

Great idea, thanks! I might just do a video.

Regarding YAML (yuck!) we need to polish them a bit more. But we aim at publishing our template on GitHub. Stay tuned! 😊